From ChatGPT to Copilot: How Employees Are Creating Hidden AI Security Risks

May 11, 2026

Introduction

Artificial intelligence is no longer emerging technology. It is already embedded inside the modern workplace.

Across the UK, employees are using AI applications such as ChatGPT, Microsoft Copilot, Claude, Gemini, Perplexity, and countless specialist tools to improve productivity, save time, analyse information, draft reports, automate repetitive work, and accelerate decision-making.

For many organisations, this represents an enormous opportunity. Teams can work faster, employees can automate administrative tasks, knowledge workers can produce content in minutes instead of hours, and businesses can gain competitive advantage through operational efficiency.

However, there is another side to this story that many leadership teams, CISOs, and compliance professionals are only beginning to understand.

Your employees are already using AI.

The real question is whether you know how they are using it.

Because while artificial intelligence is driving productivity, it is also creating a hidden security risk inside organisations, often without malicious intent, and frequently without employees even realising they are exposing sensitive information.

The uncomfortable truth is that many businesses have already lost visibility and control.

Employees are uploading confidential documents into public AI systems, sharing commercially sensitive information in prompts, exposing HR and financial data, pasting source code into third party models, and unknowingly bypassing existing data governance processes.

In many cases, security teams simply do not see it happening.

And if you cannot see it, you cannot control it.

In 2026, secure AI adoption is rapidly becoming one of the most important priorities for cybersecurity leaders.

The challenge is no longer whether employees should use AI.

The challenge is how organisations can enable AI safely, securely, and compliantly without slowing innovation.

AI Tools Are Becoming Standard Workplace Technology

Artificial intelligence has rapidly shifted from experimental technology to everyday business utility.

What began with occasional employee experimentation has evolved into widespread workplace adoption across almost every function of the organisation.

Employees are now using AI tools to draft emails, summarise meetings, analyse spreadsheets, generate reports, write code, review contracts, support customer interactions, and automate repetitive tasks. Whether through ChatGPT, Microsoft Copilot, Claude, Gemini, or industry-specific AI applications, AI is quietly becoming embedded into daily workflows in much the same way email, cloud collaboration, and internet search once did.

For many employees, using AI is no longer viewed as innovation; it is simply the fastest and easiest way to get work done.

The challenge for organisations is that AI adoption is happening far faster than governance. Employees are often introducing AI into workflows without waiting for formal approval, policy updates, or IT sign off. In many cases, business leaders may believe they have sanctioned AI usage through a single approved platform, while employees are simultaneously experimenting with multiple public and private tools outside visibility.

This growing reality of Shadow AI means organisations increasingly risk losing control over where sensitive information is shared, how decisions are being influenced, and what data may already be leaving the business without oversight.

Security Teams Are Struggling to Keep Pace

For cybersecurity teams, the pace of AI adoption has created an entirely new challenge. Traditional security controls were never designed to monitor how employees interact with generative AI applications, particularly when those tools sit outside the corporate environment.

Firewalls, email gateways, endpoint controls, and traditional data loss prevention solutions may detect obvious threats, but they often fail to provide meaningful visibility into what employees are typing into AI prompts, what files are being uploaded, or which platforms are actually being used across the organisation. The result is an expanding blind spot that many security teams are only beginning to recognise.

The reality is that many organisations do not currently know how AI is being used inside their business. Security leaders are increasingly asking difficult questions. Are employees sharing sensitive HR data with public AI tools. Are financial reports being uploaded for analysis. Are legal documents being summarised externally. Is intellectual property being exposed without anyone realising. In many cases, the answer is uncertain.

The challenge for CISOs in 2026 is no longer whether AI will be adopted, because it already has been. The challenge is how to enable AI safely while maintaining visibility, governance, and control without slowing productivity or frustrating employees.

The Most Common AI Applications Employees Use

Artificial intelligence in the workplace is no longer limited to a single platform or vendor. Employees are increasingly using a wide range of AI tools to improve productivity, reduce manual effort, and speed up decision making. While many organisations focus on formally approved solutions, the reality is that employees often experiment with multiple AI applications simultaneously depending on the task they are trying to complete.

A member of the finance team may use one platform to summarise spreadsheets, while a marketer uses another to draft content, and a developer relies on an entirely different system to accelerate coding. The challenge for security teams is that this usage frequently happens without visibility, creating hidden risks around sensitive data, governance, and compliance.

Understanding the most common AI applications employees are already using is essential for organisations looking to secure AI adoption rather than simply react to it. Below are some of the platforms most commonly appearing across enterprise environments, often without formal approval or security oversight.

ChatGPT

ChatGPT has quickly become one of the most widely used workplace AI applications in the world. Employees use it for everything from writing emails and summarising meetings to creating reports, analysing documents, generating ideas, and accelerating research. For many staff members, it has become a digital assistant sitting alongside traditional workplace applications.

However, the risk comes from what employees are entering into prompts. Consider a legal team reviewing contracts under tight deadlines. An employee may upload an entire supplier agreement into ChatGPT and ask for key clauses to be summarised or risks highlighted. A finance professional might upload budget forecasts to identify spending trends.

A construction firm preparing a major bid may paste commercially sensitive tender information into prompts to help create proposal responses more quickly. In each case, the employee is trying to work faster, not create risk, but sensitive information may already be leaving the organisation without oversight.

Microsoft Copilot

Microsoft Copilot is rapidly becoming the preferred enterprise AI platform due to its integration with Microsoft 365, including Outlook, Teams, Excel, Word, and SharePoint. Employees are using Copilot to draft presentations, summarise lengthy email chains, analyse spreadsheets, automate reporting, and improve productivity across daily tasks.

Yet many organisations underestimate the governance challenges that come with this level of integration. AI can surface information far more quickly than employees could previously access manually. For example, an employee asking Copilot to summarise all project risks discussed across Teams and SharePoint could unintentionally surface commercially sensitive information they technically had permission to access but would never have realistically discovered themselves.

Similarly, HR teams may unknowingly expose sensitive personnel information if permissions are poorly managed. AI amplifies both productivity and risk, making identity governance and access controls more important than ever.

Claude

Claude has become increasingly popular among knowledge workers, consultants, legal professionals, and technical teams due to its strong reasoning capabilities and document analysis strengths. Many users favour it for summarising lengthy reports, analysing complex information, and supporting detailed writing tasks.

For example, a law firm associate may upload large legal case files to identify patterns or create concise summaries before client meetings. A cybersecurity analyst could use Claude to interpret technical threat reports or assess incident information more efficiently. While these use cases improve speed and operational efficiency, organisations must still understand what information is being processed externally and whether employees are using enterprise-approved environments or personal accounts.

Gemini

Gemini is becoming increasingly embedded into organisations already operating within Google Workspace environments. Employees commonly use Gemini for drafting content, analysing spreadsheets, supporting research, summarising meetings, and accelerating administrative tasks.

Imagine a sales team preparing for a major client presentation.

An employee may ask Gemini to analyse historical customer communications, identify buying trends, and draft personalised recommendations. In another scenario, an operations team may upload internal planning documents to improve project delivery. While these examples demonstrate clear productivity gains, they also introduce important questions around data residency, model transparency, and organisational control over business information.

Industry Specific AI Tools

One of the biggest misconceptions organisations make is assuming AI usage revolves only around ChatGPT, Copilot, Claude, and Gemini. The reality is significantly broader. Thousands of specialist AI tools are now emerging across almost every industry and business function.

In healthcare, AI is supporting diagnostic analysis and clinical administration. In legal services, specialist platforms review contracts and accelerate due diligence. Construction firms increasingly use AI for project scheduling, bid development, and risk modelling. Financial services organisations rely on AI for fraud analysis, forecasting, compliance reviews, and customer insight generation. Cybersecurity teams themselves are adopting AI to accelerate threat analysis, vulnerability assessments, and incident response.

The challenge is that many of these applications sit completely outside traditional visibility. Employees may sign up using work email addresses, personal credentials, or free versions without involving IT or security teams. For CISOs and compliance leaders, the issue is no longer whether AI tools are being used. The reality is that they already are. The real question is whether your organisation understands which tools employees are using, what data is being entered, and how to enable productivity safely without creating unnecessary risk.

The AI Security Risks Organisations Often Miss

Much of the discussion around artificial intelligence focuses on productivity, automation, and competitive advantage. Far less attention is often given to the security implications quietly emerging beneath the surface.

For many organisations, the biggest challenge is not malicious employees deliberately misusing AI, but well intentioned staff unknowingly creating risk in pursuit of efficiency. In the same way cloud adoption initially outpaced governance, AI adoption is moving significantly faster than most organisations can monitor, secure, or control.

The problem is that many of the risks associated with generative AI are difficult to see through traditional cybersecurity controls. Existing tools were not designed to understand prompt behaviour, monitor AI interactions, assess uploaded content, or determine how external models process information. As a result, organisations are developing blind spots around some of their most valuable data. Below are some of the most common AI security risks businesses frequently underestimate.

Prompt Injection Risks

Prompt injection is becoming one of the most widely discussed emerging risks within AI security. In simple terms, prompt injection occurs when instructions are embedded or manipulated to influence how an AI model behaves, often in ways the user or organisation did not intend. While still evolving, prompt-based attacks are increasingly relevant as businesses integrate AI into workflows, automation, and decision support systems.

Imagine a customer support team using an AI assistant to summarise customer queries or automate responses. If an attacker deliberately embeds hidden instructions within submitted content, the AI may be manipulated into exposing unintended information, changing outputs, or bypassing expected controls. Similarly, employees using AI-generated recommendations without validation may unknowingly trust manipulated or misleading responses.

For security leaders, the concern is broader than just technical exploitation. AI systems increasingly influence business decisions, internal knowledge retrieval, and operational workflows. If outputs can be manipulated, the integrity of decision-making itself becomes a cybersecurity issue. Organisations must begin treating AI interactions with the same level of scrutiny applied to phishing, malware, and social engineering risks.

Sensitive File Uploads

Perhaps the most immediate and underestimated risk involves employees uploading sensitive business information into public or unapproved AI platforms. This often happens innocently. Staff are simply trying to work faster, improve productivity, or solve problems more efficiently.

Consider a HR manager preparing for annual reviews who uploads employee performance documents into an AI platform to summarise common themes. A finance professional may upload a budget forecast to analyse spending patterns. A law firm associate may upload confidential legal agreements for faster review. A construction company preparing a major tender could upload commercially sensitive project documentation to improve proposal writing.

The intention is rarely malicious.

The risk, however, is substantial.

Without proper governance, organisations may unknowingly expose sensitive financial information, personal employee data, intellectual property, customer records, pricing models, legal documents, or commercially confidential information. In regulated sectors such as legal, healthcare, financial services, and government, the consequences could include GDPR breaches, regulatory scrutiny, reputational damage, or contractual violations.

The difficult reality for many organisations is that they often do not know this activity is already happening.

Data Residency Concerns

One of the most important questions security and compliance teams should be asking is simple.

Where is organisational data actually going when employees use AI?

For many businesses, the answer remains unclear.

Different AI providers process, retain, and store information in different ways. Some models may process data within approved geographical regions, while others may involve international transfers or third-party processing arrangements that create regulatory complications.

For UK organisations operating under GDPR, data residency matters significantly. If employees are entering personal information, commercially sensitive records, or customer data into AI systems without understanding how information is processed, organisations may unintentionally introduce compliance risk.

For example, imagine a healthcare organisation using AI to improve operational reporting while staff unknowingly upload patient related information into unapproved platforms. Or a financial services employee sharing sensitive transaction information with a public AI model to speed up analysis. Even where intent is positive, governance failures can quickly become regulatory concerns.

Security leaders increasingly need visibility into which platforms are being used, where data is processed, whether enterprise protections exist, and whether organisational policies align with actual employee behaviour.

Third-Party AI Model Exposure

AI ecosystems are far more complex than many organisations realise. When employees use AI tools, information may not always stay within a single application or provider. Many platforms rely on multiple third-party models, APIs, integrations, or external processing environments behind the scenes.

This creates a growing supply chain style risk that security teams are still learning to manage.

For example, an employee using an AI-powered productivity application may assume information remains inside one trusted environment. In reality, data could be routed through third-party models, external services, or partner technologies to generate responses. Without visibility, organisations may have little understanding of who is processing business information, where it travels, or how it is protected.

This becomes especially concerning when organisations adopt multiple AI applications across departments without central oversight. One team may use approved enterprise platforms, while another relies on free consumer tools using entirely different processing arrangements.

The result is fragmented governance and increased exposure.

For CISOs, this reinforces a simple truth. You cannot secure what you cannot see. Before organisations can safely scale AI adoption, they must first understand which tools are being used, how information flows through them, and where risk is already emerging. Visibility is no longer optional. It is foundational to secure AI adoption.

The Compliance Challenge

For CISOs, compliance leaders, risk professionals, data protection officers, and GRC teams, artificial intelligence introduces a challenge far bigger than productivity alone. The issue is not simply whether employees are using AI, because in most organisations they already are. The real concern is whether the organisation can demonstrate governance, oversight, accountability, and control over how AI is being used, what information is being exposed, and whether usage aligns with regulatory obligations. In 2026, AI governance is rapidly becoming a board-level issue, not just a technology conversation.

For security and compliance teams, this creates an uncomfortable reality. Most existing compliance frameworks were designed before widespread generative AI adoption. Yet regulators increasingly expect organisations to understand how sensitive information flows through digital systems, who has access to it, and what controls exist to prevent misuse.

If employees are independently entering personal information, legal records, customer data, financial information, or confidential intellectual property into AI systems without oversight, organisations may unknowingly create significant regulatory exposure. The challenge for leadership is clear. You cannot govern what you cannot see.

GDPR Concerns

For UK organisations operating under GDPR and data protection obligations, AI introduces immediate concerns around personal data handling, lawful processing, transparency, and international transfers.

Consider a law firm handling highly confidential client matters. A fee earner under pressure to deliver work more efficiently uploads a sensitive legal agreement into a public AI platform to summarise key clauses ahead of a client meeting. Another employee pastes witness statements into an AI application to identify patterns across case documents. Someone in employment law uploads sensitive HR investigation material to accelerate analysis.

The employee believes they are improving productivity.

The organisation may now have a serious compliance issue.

Why?

Because questions immediately arise around where that information has been processed, whether personal data has been transferred internationally, whether appropriate safeguards exist, and whether the organisation has fulfilled its obligations under GDPR.

For law firms in particular, confidentiality is fundamental to trust. Clients expect sensitive legal matters, commercial disputes, mergers, acquisitions, employment investigations, and intellectual property discussions to remain protected at all times. Even perceived misuse of AI involving confidential information can create reputational damage that extends far beyond financial penalties.

The challenge is not hypothetical.

Many organisations are already discovering employees uploading sensitive material into AI systems without realising the regulatory implications.

For CISOs and DPOs, the question increasingly becomes:

Can we prove sensitive information is not being shared inappropriately through AI platforms?

For many organisations today, the answer remains uncertain.

Regulatory Expectations Are Increasing

Beyond GDPR, regulatory scrutiny around AI governance is increasing rapidly.

Financial services firms face expectations from regulators around operational resilience, data governance, third party risk, and explainability. Legal organisations must maintain client confidentiality and professional obligations. Healthcare providers must safeguard patient information. Public sector organisations face increasing pressure to demonstrate responsible use of emerging technology.

The problem is that regulators are rarely interested in good intentions.

They want evidence.

If an incident occurs involving AI misuse, organisations may need to demonstrate:

• Which AI tools employees were using

• Whether usage was approved or unmanaged

• What sensitive information may have been exposed

• Whether controls were in place

• What user guidance existed

• How risks were identified and mitigated.

This creates a difficult challenge for governance teams.

Traditional compliance processes often rely on policies, awareness training, and periodic audits. Yet AI usage changes daily, often hourly. Employees adopt new tools constantly, and many security teams have little visibility into actual behaviour.

Take the example of a UK law firm advising clients on high value mergers and acquisitions. A partner preparing briefing notes asks a public AI system to summarise commercially sensitive due diligence findings. A junior associate later uploads sections of confidential documentation to accelerate legal drafting. Weeks later, leadership becomes aware of widespread AI usage but has no clear understanding of what information was shared, which applications were used, or whether organisational policies were followed.

At that point, governance becomes reactive rather than proactive.

The risk has already materialised.

For CISOs and GRC leaders, this highlights why AI governance can no longer sit solely within IT. It must become part of enterprise risk management, compliance strategy, and executive oversight.

Auditability and Reporting Gaps

Perhaps one of the most overlooked problems in enterprise AI adoption is the lack of auditability.

If a regulator, auditor, client, insurer, or board member asked a simple question today:

"Can you demonstrate how AI is being used across your organisation?"

Could your organisation answer confidently?

For many businesses, the honest answer is no.

Security teams often have limited visibility into:

• Which AI applications are being used

• Who is accessing them

• What prompts employees are entering

• Whether sensitive documents are uploaded

• Which users present the highest risk

• Whether policy violations are occurring.

This creates a significant reporting gap for compliance and governance teams.

Imagine a law firm facing a major client security assessment as part of a panel appointment process. Increasingly, sophisticated clients are beginning to ask direct questions around AI governance.

How do you prevent confidential legal data being exposed through AI tools?

What controls exist over employee AI usage?

Can you monitor and report on AI related risks?

How do you ensure legal confidentiality is maintained?

Without meaningful visibility, many organisations struggle to answer these questions with confidence.

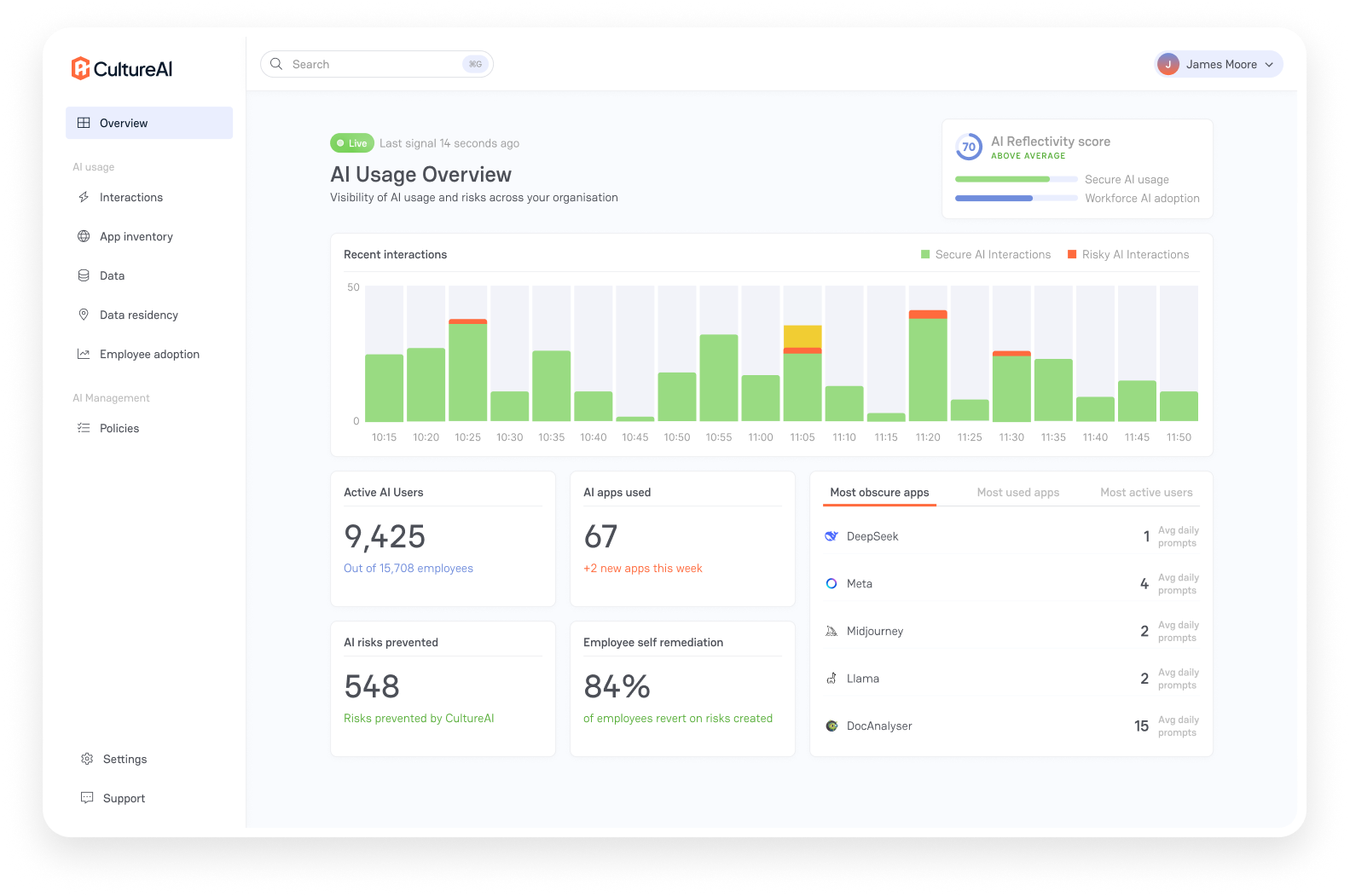

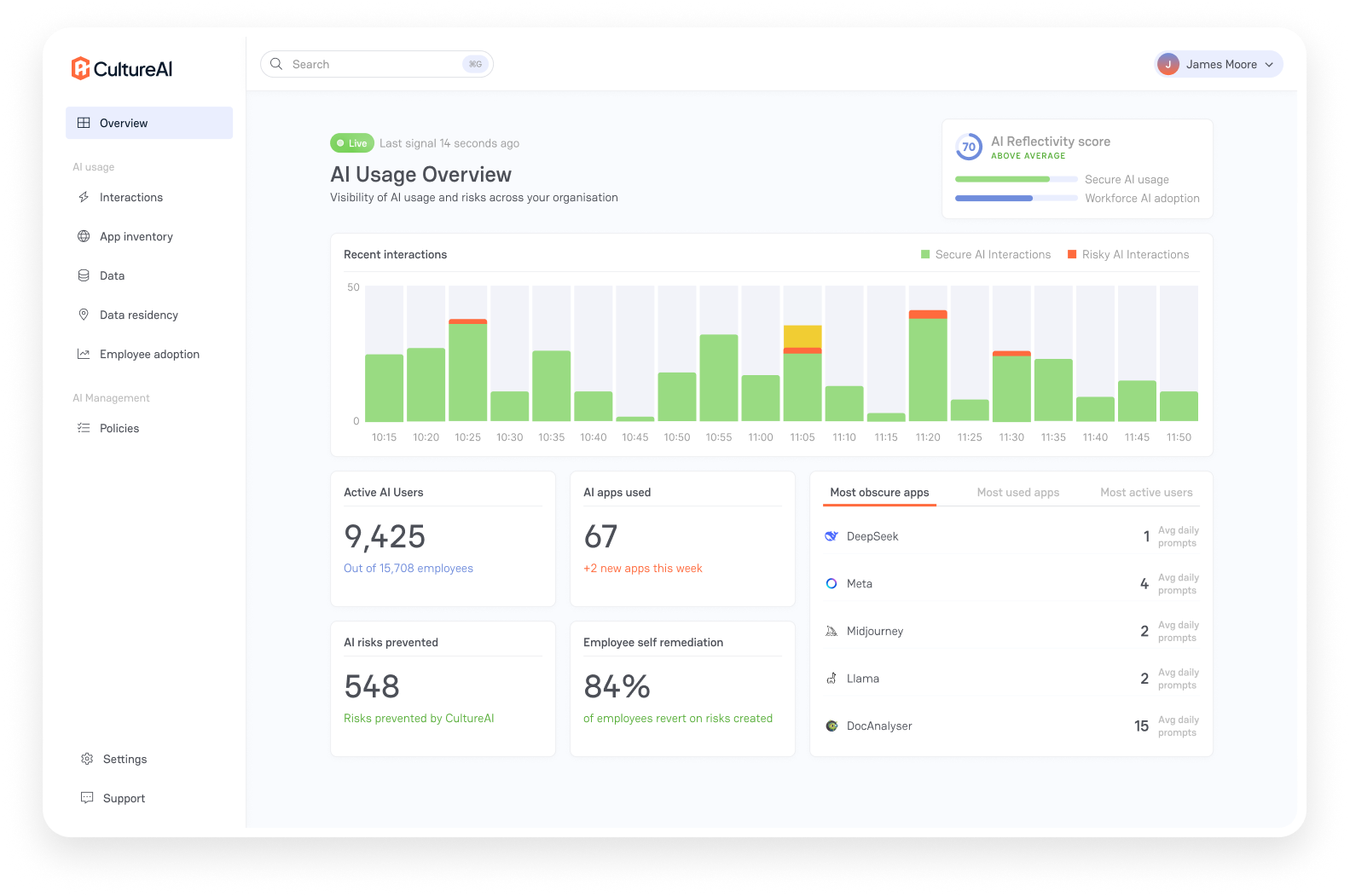

Forward thinking organisations are beginning to address this challenge by introducing real time visibility into employee AI usage, policy enforcement, behavioural intelligence, and reporting capabilities that provide security and compliance teams with a clearer picture of organisational risk.

For example, compliance teams can begin to understand:

• Which departments are using AI most heavily

• Whether sensitive file uploads are occurring

• Which applications are approved or unmanaged

• Where policy risks are emerging

• Which users may require additional guidance or intervention.

This changes the conversation entirely.

Instead of reacting to unknown risks, organisations gain the ability to proactively govern AI adoption while supporting productivity.

For CISOs, compliance professionals, and GRC teams, this is ultimately what secure AI adoption looks like.

Not banning AI.

Not slowing innovation.

But creating an environment where organisations can confidently demonstrate visibility, governance, compliance, and control while employees continue benefiting from the productivity gains AI delivers.

Because in 2026, one reality is becoming increasingly clear.

If employees are already using AI, governance can no longer be optional.

How to Reduce AI Risk Without Slowing Productivity

One of the biggest mistakes organisations make when responding to AI risk is attempting to stop employees from using artificial intelligence altogether. In theory, outright bans may sound sensible. In reality, they rarely work. Employees are already under pressure to move faster, improve efficiency, and deliver more with fewer resources.

If approved tools are unavailable or overly restrictive, staff often find alternative ways to access AI using personal devices, free applications, or unmanaged accounts. This simply pushes AI usage further underground, making risk harder to see and even more difficult to control.

The organisations succeeding with AI in 2026 are not the ones attempting to block innovation. They are the organisations embracing secure AI adoption. They recognise a simple truth. Employees want to use AI because it improves productivity.

The challenge is not whether people should use artificial intelligence, but how organisations can enable it safely, maintain compliance, and reduce security exposure without frustrating employees or slowing business outcomes. The answer lies in governance, visibility, and intelligent controls that support productivity rather than restrict it.

Approved AI Tools

The first step in reducing AI risk is giving employees safe alternatives.

If staff are already turning to public AI tools to improve productivity, organisations should ask themselves an important question.

Have we provided approved options that meet business needs?

Many employees do not intentionally seek risky tools. They simply use what is easiest and most accessible. If approved solutions are unavailable, slow to implement, or difficult to access, staff naturally look elsewhere.

For example, imagine a legal team regularly reviewing large volumes of contracts. Without approved AI capability, employees may turn to free public platforms to summarise clauses and accelerate due diligence work. A finance department under pressure to prepare reports may use unmanaged tools to analyse spreadsheets and create executive summaries. Marketing teams may rely on external AI systems for content generation because internal alternatives do not exist.

The answer is not a restriction.

The answer is enablement.

Leading organisations are increasingly identifying approved AI applications that meet both business and security requirements. These environments often include stronger controls around identity, data protection, auditability, permissions, and governance.

More importantly, employees should know exactly which tools are approved and why.

Clear communication matters.

When staff understand there are safe, supported alternatives available, adoption naturally shifts toward lower risk behaviours.

AI Usage Policies

Traditional acceptable use policies were not written for generative AI.

Many organisations still rely on outdated guidance that says little or nothing about how artificial intelligence should be used in practice.

This creates ambiguity.

Employees are left to make their own decisions around what is acceptable, what information can be entered into prompts, and which platforms are safe to use.

Strong AI governance begins with clear, practical policy.

However, effective AI policies should not simply focus on prohibition.

They should provide clarity.

Employees need to understand:

• Which AI tools are approved

• What business use cases are acceptable

• What information should never be uploaded

• How sensitive data must be handled

• When human review is required

• How AI generated outputs should be validated.

For example, a law firm policy may clearly prohibit client confidential documents from being entered into public AI systems while allowing approved enterprise AI for internal productivity tasks. A financial services organisation may restrict customer financial information from being processed externally but allow secure summarisation of internal operational reporting.

The key is practicality.

Policies that are too restrictive often fail because employees ignore them.

Policies that enable safe usage are significantly more effective.

Most importantly, AI policies should evolve continuously as technology changes. What was acceptable six months ago may no longer align with organisational risk tolerance or regulatory expectations.

Real Time Monitoring

Policies alone are not enough.

One of the biggest challenges organisations face is the difference between what they believe is happening and what employees are actually doing.

Without visibility, governance becomes guesswork.

Many organisations currently have little understanding of:

• Which AI applications employees use

• How frequently AI tools are accessed

• Whether personal or enterprise accounts are involved

• What sensitive information may be exposed

• Which departments carry the greatest risk

This creates dangerous blind spots.

For example, a compliance team may assume only approved enterprise AI tools are being used, while employees are simultaneously accessing dozens of unmanaged applications. A legal department may unknowingly upload highly sensitive information into public AI systems despite internal policy guidance advising otherwise.

Forward-thinking organisations are increasingly introducing real-time visibility into AI usage to address this challenge.

This means understanding:

• Which AI platforms employees access

• When risky behaviours emerge

• Whether sensitive file uploads occur

• Which teams are creating the greatest exposure

• Where policy violations may exist

Importantly, monitoring should not feel punitive.

This is not about surveillance.

It is about understanding risk in the same way organisations monitor phishing exposure, endpoint activity, or insider threats.

You cannot reduce risk you cannot see.

For CISOs and GRC teams, real time visibility becomes essential for governance, compliance reporting, incident response, and executive assurance.

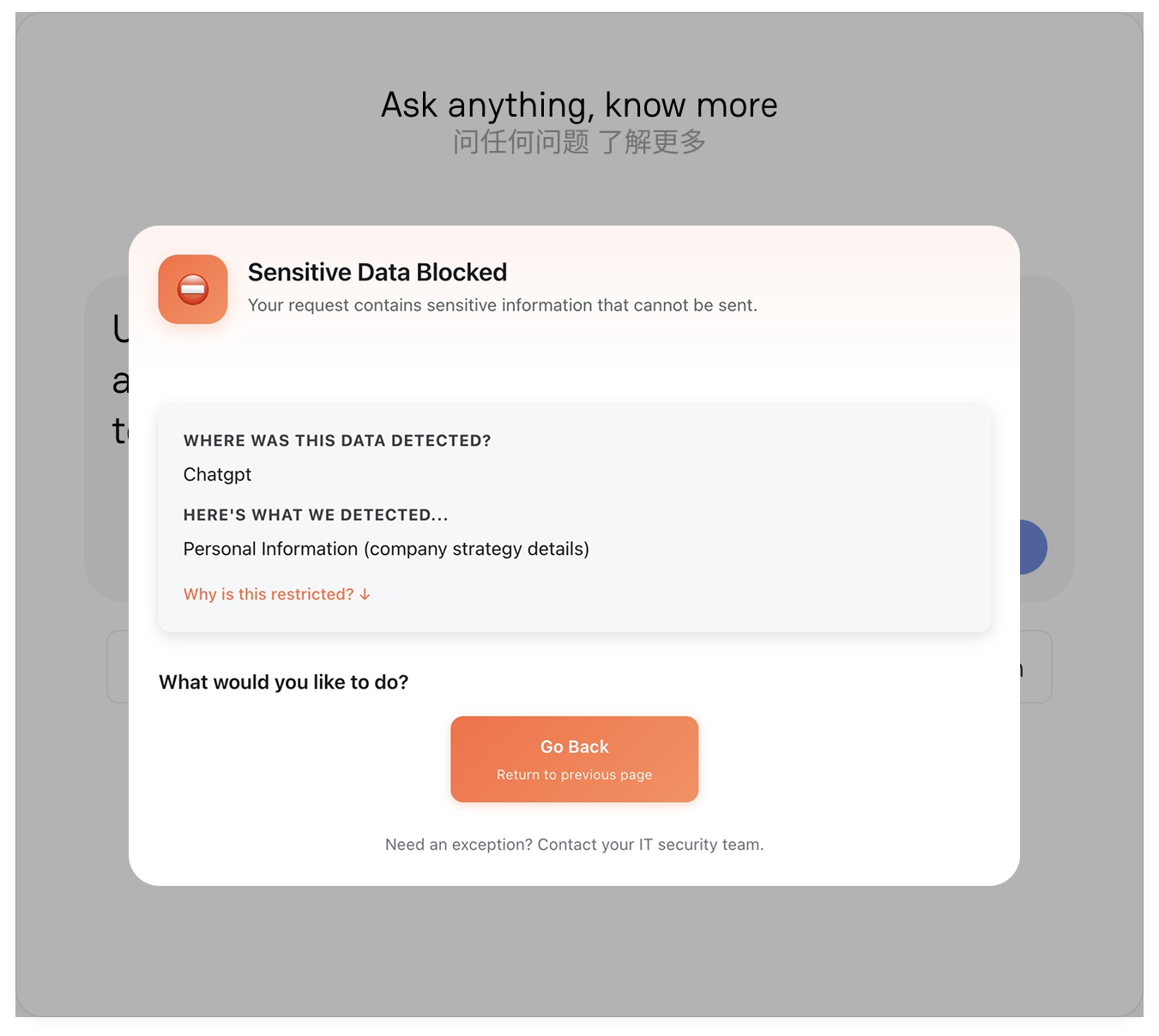

Intelligent User Redirection

Perhaps one of the most effective ways to reduce AI risk without damaging productivity is intelligent user redirection.

Rather than simply blocking employees when risky behaviour occurs, organisations can guide them toward safer alternatives.

Imagine an employee attempts to upload sensitive financial information into an unapproved public AI application.

Instead of a hard block that frustrates productivity, the organisation can provide a contextual warning explaining the risk and redirect the employee toward an approved enterprise environment.

Similarly, if an employee repeatedly accesses unmanaged AI tools, they can receive targeted education explaining safe usage policies and approved alternatives.

This changes employee behaviour without creating friction.

For example, a law firm associate attempting to upload confidential legal documents into a public AI platform may receive an immediate message advising that client confidentiality obligations prohibit this activity and redirecting them toward an approved secure AI environment.

A HR manager working with employee performance data could receive contextual guidance reminding them of GDPR obligations before sensitive data is exposed.

This approach matters because employees rarely respond well to blanket restrictions.

They respond better to enablement.

The goal is not to stop employees using AI.

The goal is to help them use AI safely.

In 2026, organisations that balance productivity with protection will be the ones that succeed. They will understand where AI is being used, provide safe environments for innovation, guide users toward lower risk behaviours, and maintain visibility without slowing business performance.

Because secure AI adoption is not about saying no.

It is about giving employees the confidence to say yes, safely.

Turning Visibility Into Strategic Advantage

While much of the conversation around AI usage focuses on risk, there is also a significant opportunity.

Organisations that gain visibility into AI usage are not just better positioned to manage risk. They are also better positioned to unlock value.

Visibility provides insight into how employees are using AI to improve productivity. It highlights areas where AI is delivering tangible benefits and where additional investment may be justified. It also identifies inefficiencies, duplication, and opportunities for optimisation.

In this sense, visibility becomes a strategic asset.

It enables organisations to move beyond reactive governance and towards proactive optimisation. It allows them to harness AI more effectively, aligning usage with business objectives and driving measurable outcomes.

This is where the real value lies.

Not just in controlling risk, but in enabling smarter, more informed use of AI across the organisation.

What Security Teams Should Prioritise in 2026

For cybersecurity leaders, 2026 represents a turning point in how organisations think about artificial intelligence. The conversation is no longer centred around whether employees should be allowed to use AI. That debate has already passed.

Employees are already using ChatGPT, Microsoft Copilot, Claude, Gemini, and hundreds of specialist AI applications to improve productivity, accelerate workflows, and solve business challenges faster than ever before. The challenge for security teams now is far more strategic.

How do you embrace AI without losing control?

For many CISOs, the answer lies in shifting from reactive security toward intelligent AI governance. Traditional security controls alone are not enough.

Firewalls, endpoint tools, and conventional monitoring platforms were not designed to understand prompt activity, sensitive data exposure through AI, or how employees interact with rapidly evolving generative platforms. In 2026, organisations that succeed will be the ones that create visibility into AI usage, understand employee behaviour, align AI with data protection controls, and provide executive leadership with meaningful insight into risk exposure before problems escalate.

AI Governance

The first priority for security teams must be governance.

AI adoption without governance creates uncertainty, and uncertainty creates risk.

Most organisations already have governance frameworks for cloud services, acceptable use, identity management, and data handling. Artificial intelligence now requires the same level of structure and executive oversight.

The challenge is that AI evolves significantly faster than traditional technology adoption. New applications appear weekly. Employees experiment constantly. Approved environments quickly become mixed with unmanaged platforms, creating a fragmented and difficult-to-govern ecosystem.

For example, imagine a financial services organisation that approves a single enterprise AI platform for employee productivity. Leadership believes governance is under control. Yet across the business, employees continue experimenting with multiple external AI applications to improve efficiency. Some summarise customer information. Others analyse financial reports. A few upload commercially sensitive documentation to accelerate work.

Without governance, leadership operates under false confidence.

Strong AI governance means understanding:

• Which AI applications employees are using

• Which tools are approved or unmanaged

• What types of information are being entered into prompts

• Where organisational risk is emerging

• Whether policies align with real employee behaviour.

Most importantly, governance should enable AI adoption rather than block it.

The organisations leading in 2026 will be those creating structured, secure frameworks where employees can use AI confidently, safely, and within clear organisational boundaries.

Increasingly, this means moving beyond static policy documents toward platforms capable of providing visibility, contextual guidance, policy enforcement, and governance at scale without interrupting productivity.

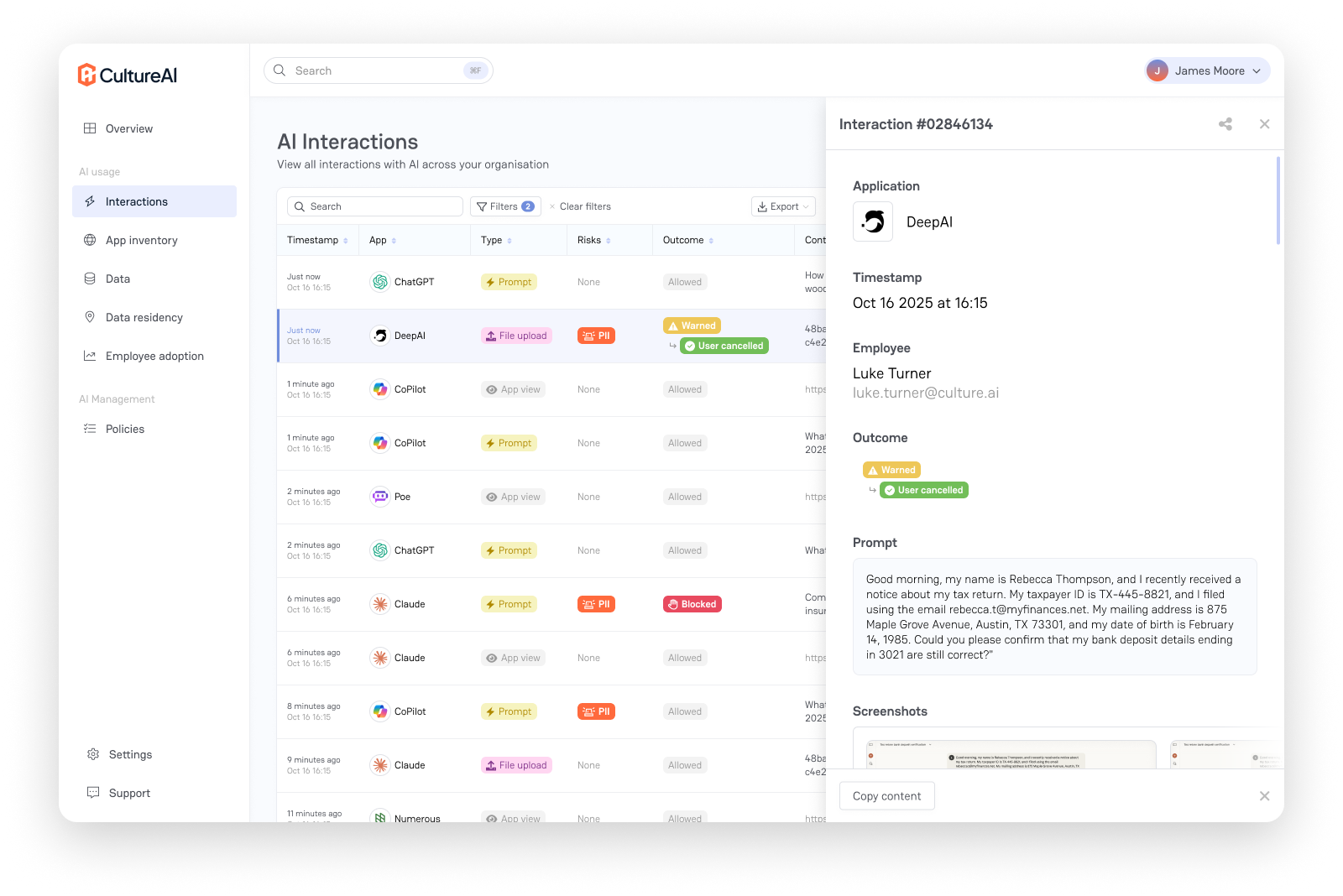

Behavioural Intelligence

One of the biggest blind spots in enterprise AI security is behaviour.

Most organisations focus heavily on applications, but far fewer understand how employees actually interact with AI.

Behavioural intelligence is becoming increasingly important because risk rarely looks the same across every user.

For example, an employee occasionally using an approved AI assistant for email drafting presents very different risk compared to someone regularly uploading HR records, legal contracts, financial information, or intellectual property into public tools.

Understanding behaviour matters.

Security teams increasingly need visibility into:

• Which employees use AI most heavily

• Which departments carry greater risk exposure

• Whether sensitive file uploads are occurring

• How users respond to policy guidance

• Which behaviours indicate elevated risk.

Consider a UK law firm advising clients on high-value mergers and acquisitions. A junior associate begins regularly uploading confidential due diligence documents into public AI tools to speed up legal drafting. Another employee repeatedly accesses unmanaged AI applications outside policy.

Traditional security controls may never flag this behaviour. Behavioural intelligence can.

Rather than reacting after an incident, security teams gain the ability to identify patterns early, understand emerging risk, and intervene before sensitive information is exposed.

Importantly, this should not be viewed as employee surveillance.

The goal is not to catch people out.

The goal is to understand where risk exists and help employees use AI more safely.

Forward-thinking organisations are increasingly introducing intelligent capabilities that identify risky AI behaviours in real time and provide contextual support before exposure occurs. For CISOs, this changes the conversation from reactive incident response to proactive risk reduction.

Data Protection Integration

AI governance cannot exist in isolation.

In 2026, security leaders must ensure artificial intelligence aligns directly with broader data protection strategies.

Because ultimately, AI risk is often data risk.

Employees rarely intend to create security problems. Most issues emerge because sensitive information is handled without understanding the consequences.

A HR employee uploads salary information for analysis.

A finance professional pastes confidential forecasts into an AI tool.

A legal associate uploads privileged documentation to summarise client risks.

A construction firm working on critical infrastructure bids enters commercially sensitive project data into prompts to accelerate proposal development.

The common theme is simple.

Sensitive information is moving faster than governance.

Security teams increasingly need AI controls that work alongside existing data protection programmes, including:

• Data classification policies

• Data loss prevention controls

• Identity and access management

• Insider risk monitoring

• Compliance reporting frameworks.

This integration matters because AI usage cannot become another unmanaged blind spot.

Leading organisations are increasingly implementing intelligent controls capable of recognising when sensitive data interacts with AI systems, applying contextual warnings, and redirecting employees toward safer environments before risk materialises.

For example, if an employee attempts to upload client confidential legal documentation into an unapproved AI platform, data-aware controls can intervene immediately, explain the risk, and recommend approved alternatives.

This enables productivity while preserving security.

Because the future of AI governance is not about saying no.

It is about enabling safe usage in context.

Executive Reporting

Perhaps the biggest shift CISOs must make in 2026 is recognising that AI risk is now a boardroom issue.

Executives are increasingly asking difficult questions:

How is AI being used inside our organisation?

Are employees exposing sensitive information?

What compliance risks exist?

Can we prove governance is in place?

Are we enabling AI safely?

Unfortunately, many security teams still struggle to answer confidently.

This is because traditional reporting was never designed for AI.

Boards increasingly need visibility into:

• AI adoption across the organisation

• High risk behaviours and trends

• Sensitive data exposure risks

• Policy compliance levels

• Departmental risk indicators

• Emerging governance concerns.

Imagine a managing partner at a law firm asking whether confidential client data has ever been exposed through AI tools.

Or a board member asking for assurance that commercially sensitive information is not being entered into unmanaged systems.

Without reporting, CISOs are forced to rely on assumptions.

Executive reporting changes this dynamic entirely.

Rather than anecdotal concerns, leadership gains measurable insight into AI risk across the organisation. They can see adoption trends, understand where risk is emerging, demonstrate governance to regulators and auditors, and make informed decisions around future AI strategy.

Increasingly, organisations are moving toward intelligent reporting environments that provide real time visibility into employee AI usage, behavioural trends, policy adherence, and data protection risks, all translated into language executives and boards can understand.

Because ultimately, secure AI adoption is not just a technical problem.

It is a business resilience issue.

The organisations that thrive in 2026 will not be the ones attempting to stop AI.

They will be the ones that govern it intelligently, understand behaviour, protect sensitive data, and provide leadership with confidence that innovation is happening safely, visibly, and under control.

Secure AI Adoption Requires Visibility and Control

Artificial intelligence is already embedded in the workplace.

Employees are using ChatGPT, Microsoft Copilot, Claude, Gemini, and specialist AI applications to improve productivity, automate tasks, and work faster. The challenge for organisations is no longer whether employees are using AI, because they already are. The real question is whether it is being used securely.

For CISOs, compliance teams, and business leaders, the biggest concern is often not malicious behaviour, but well intentioned employees unknowingly exposing sensitive information. Financial data, HR records, legal documents, intellectual property, and customer information are increasingly being entered into AI tools, often without visibility from security teams.

This is why secure AI adoption requires visibility and control.

Organisations need to understand:

• Which AI tools employees are using

• What sensitive information may be exposed

• Whether approved or unmanaged platforms are being accessed

• How to demonstrate governance and compliance.

Because you cannot secure what you cannot see.

The organisations that succeed in 2026 will not block AI. They will enable it safely, balancing innovation with governance, productivity with protection.

If your organisation cannot confidently explain how AI is being used today, now is the time to act.

Cybergen Security helps organisations gain visibility and control over AI usage, enabling secure and compliant AI adoption without slowing productivity.

Book a Cybergen AI Security Demo to see how your organisation can reduce hidden AI risk and enable employees to use AI safely.

Ready to strengthen your security posture? Contact us today for more information on protecting your business.

Let's get protecting your business

Thank you for contacting us.

We will get back to you as soon as possible.

By submitting this form, you acknowledge that the information you provide will be processed in accordance with our Privacy Policy.

Please try again later.

Cybergen News

Sign up to get industry insights, trends, and more in your inbox.

Contact Us

Thank you for subscribing. It's great to have you in our community.

Please try again later.

SHARE THIS